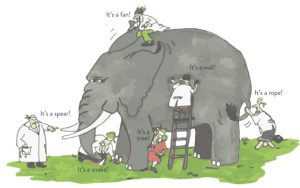

The Rashomon Effect: how different, limited views can lead to different conclusions

CNS 2026 guest post by Mohith (Mo) M. Varma

Sitting through the four talks at the “Mapping Emotions in the Brain” symposium at the CNS 2026 annual meeting, I couldn’t shake the feeling I was watching the scientific equivalent of Rashomon, Akira Kurosawa’s 1950 masterpiece where four witnesses give different accounts of the same pivotal event (no spoilers!). That film gave us a name for this kind of thing: the Rashomon Effect. At the CNS symposium, the matter at the center of it all was a deceptively simple question: Can we locate our emotions in the brain? Four researchers, using some of the most sophisticated machine learning tools in modern neuroscience, offered different answers. Rashomon Effect, at its finest, in a conference room!

As you might expect, I left the symposium without a clear answer. But in hindsight, I have come to think that was precisely the point of the symposium and, in fact, more valuable than any tidy conclusion would have been. It motivated me to dig deeper into the competing ideas discussed at the symposium, reflect on their philosophical underpinnings, and work out where I, a budding emotion researcher, actually stand.

Four Researchers, Four Answers

Before getting into the four talks, it’s worth briefly pausing on the machine learning approach that underpinned all of them. The core logic, in broad strokes, is to train a model to detect reliable patterns in brain signals and classify them well enough to predict what emotion a person was feeling. This is no small feat.
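For readers who like to see that logic in code, here is a minimal sketch on simulated data. It is not any speaker's actual pipeline: the emotion labels, the 50 "voxels", the noise level, and the nearest-centroid classifier are all assumptions chosen to keep the example small. The idea is simply to learn an average activity pattern per emotion from training trials, then label held-out trials by whichever average they most resemble.

```python
import random

def make_trials(prototype, n_trials, noise_sd, rng):
    """Simulate trials: one fixed activity pattern plus Gaussian noise per voxel."""
    return [[v + rng.gauss(0.0, noise_sd) for v in prototype]
            for _ in range(n_trials)]

def centroid(trials):
    """Average pattern across trials, voxel by voxel."""
    return [sum(col) / len(trials) for col in zip(*trials)]

def sq_distance(a, b):
    """Squared Euclidean distance between two activity patterns."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def predict(pattern, centroids):
    """Return the label whose centroid is closest to the pattern."""
    return min(centroids, key=lambda label: sq_distance(pattern, centroids[label]))

def run_decoding(n_voxels=50, n_train=20, n_test=20, noise_sd=1.0, seed=0):
    rng = random.Random(seed)
    emotions = ["fear", "joy"]  # hypothetical labels for illustration
    # Assumption: each emotion has one fixed "true" pattern across voxels.
    prototypes = {e: [rng.gauss(0.0, 1.0) for _ in range(n_voxels)]
                  for e in emotions}
    train = {e: make_trials(prototypes[e], n_train, noise_sd, rng) for e in emotions}
    test = {e: make_trials(prototypes[e], n_test, noise_sd, rng) for e in emotions}
    # "Training" here is just averaging the training trials per emotion.
    centroids = {e: centroid(train[e]) for e in emotions}
    # Evaluate on trials the model has never seen.
    correct = sum(predict(p, centroids) == e for e in emotions for p in test[e])
    return correct / (n_test * len(emotions))

if __name__ == "__main__":
    print(f"held-out accuracy: {run_decoding():.2f} (chance = 0.50)")
```

The important design choice is the split between training and test trials: a model only counts as "decoding" an emotion if it predicts the label for data it was not trained on. Real studies use far more sophisticated classifiers and cross-validation schemes than this toy version.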

A modern fMRI scanner captures brain activity about once every second across roughly a million tiny units called voxels, each housing tens of thousands of neurons. That is a staggering amount of data from a single brain scan. Multiply it by the number of participants in a study and the time each of them spends inside the scanner, and the data become far too rich and complex to sift through manually. That’s where machine learning algorithms can help, by detecting reliable patterns buried in that massive amount of data.
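To put rough numbers on that, here is a back-of-the-envelope calculation. The voxel count and sampling rate come from the paragraph above; the 30-minute scan and the 100 participants are assumptions picked purely for illustration, not figures from any of the studies discussed.

```python
def count_measurements(voxels, samples_per_second, scan_minutes, participants):
    """Return (values per participant, values across the whole study)."""
    per_participant = voxels * samples_per_second * scan_minutes * 60
    return per_participant, per_participant * participants

per_person, whole_study = count_measurements(
    voxels=1_000_000,       # "roughly a million" voxels
    samples_per_second=1,   # "about once every second"
    scan_minutes=30,        # assumption: hypothetical session length
    participants=100,       # assumption: hypothetical sample size
)
print(f"per participant: {per_person:,} values")
print(f"whole study:     {whole_study:,} values")
```

Under these assumptions a single participant contributes 1.8 billion measurements, and the full study 180 billion.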

To map brain activity onto emotions using this approach, participants view emotionally charged material, such as short film clips, while lying inside the scanner. The assumption is that these clips can evoke specific emotions, though what that actually means is worth unpacking, and I will come back to it in the next section. Researchers in these studies typically use multiple different clips per emotion rather than repeating the same one, which both boosts the signal-to-noise ratio in the brain data and avoids a real practical problem: a clip losing its emotional punch due to repetition. The clips themselves can look quite different across studies.

For instance, in a study discussed by Ajay Satpute of UCLA at the symposium, researchers used short first-person footage of fear-inducing scenarios (e.g., walking along the edge of a cliff), while some of the other studies used a more varied set of clips, mostly drawn from movies, to induce a specific emotion. Researchers validate whether their chosen clips can successfully induce a specific emotion by asking participants what they felt and how intensely; oftentimes, clips are prevalidated before use in a study.

At the symposium, Kevin LaBar of Duke University (pictured at right/above) presented a study that really evoked a sense of awe in me due to its sheer scale. His team scanned 136 participants while they viewed pre-validated film clips and emotion-evoking text. If you know anything about brain imaging research, that is a lot, especially for a single study, in terms of time, effort, and the incredible cost. What their machine learning algorithms could do was pretty neat: Specific patterns of brain activity, distributed across the whole brain, could reliably predict both the emotion category of what participants were watching and what they actually reported feeling.

The studies that Heini Saarimäki of Tampere University in Finland (pictured at left/above) discussed followed a similar approach, but with an additional dimension. In at least one of them, her team didn’t just link brain activity to the emotion category of the pre-validated materials or the emotion participants reported. They also looked at self-reported interoceptive awareness, how aware people are of their own bodily signals like breathing or heart rate. Heini’s team found that while brain activity for specific self-reported emotions showed up in a broad and distributed pattern across the whole brain, self-reported interoceptive awareness mapped onto a more specific brain region, namely the secondary somatosensory cortex.

Patrik Vuilleumier of the University of Geneva pushed things further. His evidence suggested that the broad, distributed brain activity patterns evoked by those pre-validated emotion-evoking film clips and text seem to capture richer, more layered information, including how novel something is, how relevant it is, and its social significance.

Challenging these talks in true Rashomon fashion, however, was the presentation by Satpute. His team looked at brain activation patterns across three different types of fear-inducing clips: fear of heights, fear of spiders, and fear of social threat like encountering an aggressive cop. Given that all these clips induce self-reported fear, you would expect the brain to respond similarly across all three types of clips. But it didn’t. The brain activation patterns showed very little overlap between the three contexts. It made me wonder: When researchers pool together clips all labelled “fear” and find a distributed but consistent brain pattern, are they actually mapping the emotion of fear, or just the particular cocktail of cognitive and interoceptive features that happen to show up in that specific type of fearful situation?

This all points toward a picture where an emotional experience isn’t just one thing happening in one place within the brain. The distributed brain activity pattern evoked by a “fear” clip, for instance, does not seem to reflect fear alone. It also captures other features that get co-engaged in that moment: how novel the stimulus feels, how your body is responding, and so on. In other words, what the algorithm labels as “fear” in the brain is really a rich cocktail of cognitive and interoceptive ingredients. Whether those are the necessary ingredients that make fear “fear”, or just fellow travelers along for the ride, is a whole other fascinating debate!
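The worry raised by the low overlap between fear contexts can be illustrated with a toy simulation. To be clear, this is not Satpute's analysis: the voxel ranges, signal strength, and nearest-centroid classifier are all assumptions, and the two fear contexts are deliberately built to share no voxels. A model trained to separate "fear of heights" trials from neutral ones does well on new heights trials, yet falls to about chance when the "fear" trials come from the spider context.

```python
import random

N_VOXELS = 50
HEIGHTS_VOXELS = range(0, 10)   # hypothetical voxels driven by fear of heights
SPIDER_VOXELS = range(20, 30)   # hypothetical voxels driven by fear of spiders

def simulate(active_voxels, n_trials, rng, signal=2.0):
    """Gaussian noise on every voxel, plus a fixed signal on the active ones."""
    active = set(active_voxels)
    return [[(signal if v in active else 0.0) + rng.gauss(0.0, 1.0)
             for v in range(N_VOXELS)] for _ in range(n_trials)]

def centroid(trials):
    """Average pattern across trials, voxel by voxel."""
    return [sum(col) / len(trials) for col in zip(*trials)]

def predict(pattern, centroids):
    """Return the label whose centroid is closest to the pattern."""
    def sq_dist(c):
        return sum((x - y) ** 2 for x, y in zip(pattern, c))
    return min(centroids, key=lambda label: sq_dist(centroids[label]))

def accuracy(fear_trials, neutral_trials, centroids):
    """Fraction of fear and neutral trials given the correct label."""
    hits = sum(predict(t, centroids) == "fear" for t in fear_trials)
    hits += sum(predict(t, centroids) == "neutral" for t in neutral_trials)
    return hits / (len(fear_trials) + len(neutral_trials))

def run_generalization(n_train=30, n_test=30, seed=1):
    rng = random.Random(seed)
    # Train "fear vs. neutral" using only the heights context.
    centroids = {
        "fear": centroid(simulate(HEIGHTS_VOXELS, n_train, rng)),
        "neutral": centroid(simulate([], n_train, rng)),
    }
    # Test on new heights trials (same context) and spider trials (new context).
    within = accuracy(simulate(HEIGHTS_VOXELS, n_test, rng),
                      simulate([], n_test, rng), centroids)
    across = accuracy(simulate(SPIDER_VOXELS, n_test, rng),
                      simulate([], n_test, rng), centroids)
    return within, across

if __name__ == "__main__":
    within, across = run_generalization()
    print(f"same context: {within:.2f}, new context: {across:.2f} (chance = 0.50)")
```

In this simulation the failure is built in by construction, so it proves nothing about real brains. It only shows why testing a decoder across contexts, and not just across trials, is informative about what the label "fear" is actually capturing.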

Wait… what exactly are we trying to locate in the brain?

The obvious answer is: emotions, duh. It’s right there in the symposium title. But as some of the talks indicated, the brain activity evoked by those emotion-specific materials doesn’t just reflect the emotion itself. It also tracks things like novelty, which on the face of it has less to do with feeling afraid and more to do with determining whether the situation is similar to something experienced in the past or not. And that’s not necessarily a flaw in the method so much as a reflection of how emotions actually work.

Emotions don’t operate in isolation. They recruit memory (have I been in something like this before?), motor responses, and decision-making (what should I do next?). So when you see something like a “fear” clip lighting up much of the brain, as in the figure above, that snapshot isn’t capturing fear in isolation. It reflects a self-reported experience of fear, with many related yet non-emotional processes unfolding together.

There’s another layer worth flagging. All the pre-validated materials used in these studies, whether film clips, images, or text sentences, earn their emotion labels through self-reported ratings. Even putting aside how much subjective ratings vary from person to person, self-reports of emotion come with a deeper issue: They lack a clear ground truth. For most psychological processes, the stimulus has a defined reference point. If you are studying vision, you can verify whether someone actually saw a red circle. If you are studying memory, you can check whether they actually remembered the word. But with emotion, the same “fear-inducing” clip might genuinely scare one person and barely affect another.

Setting that variability aside, and assuming the pre-validated materials consistently evoke their labelled emotions, what these machine learning models are really learning to predict from brain activity is the self-reported experience of an emotion. In that sense, these studies may be doing something we already do in everyday life, i.e., inferring how someone feels from observable cues like their facial expressions. The difference is that, instead of behaviour, these studies are using patterns of brain activity.

From Snapshots to Mechanisms

Mapping which brain regions activate during an emotion is a genuine achievement. But for me, the more pressing question sits one step further: which of those regions actually matter? A widespread activation pattern tells you what lights up, not which brain region is doing the heavy lifting. Within that sprawling network, some regions are probably critical, meaning knock them out and the emotional experience changes fundamentally, while others may just be along for the ride, reflecting the rich and multisensory nature of an emotional experience.

This is where I would love to see machine learning go next: not just predicting a self-reported emotional experience from brain activity, but explaining that prediction. Which brain region within the distributed pattern activates first and drives what comes after? Which regions are necessary rather than merely correlated? Establishing that kind of causal pathway would let us move from description to mechanism.

Emotions beyond the Present

There is one more gap I kept returning to throughout the symposium, and it was reassuring to hear it raised during the Q&A too: All of the studies covered in the symposium focused on emotions triggered by something right in front of you, such as a clip, an image, or a sentence. But a huge portion of our emotional lives doesn’t work that way. For instance, we feel anxious about things that haven’t happened yet or because of aversive reminders of the past. In fact, my own work presented at CNS this year looks at this kind of emotion by asking: Does blunting negative emotions triggered by reminders help us forget the unpleasant memories tied to those reminders?

Whether these internally generated emotions recruit the same critical brain regions as stimulus-driven ones is an open and important question. They may differ in intensity or in how they unfold over time, but if they share the same necessary neural architecture, that would tell us something profound about what an emotional experience fundamentally is, regardless of what sets it off.

Meanwhile, seeing the Rashomon Effect play out across those talks highlights that variation may not be a flaw to fix, but a feature of emotion worth taking seriously. The real and exciting challenge for our field is to build that heterogeneity into the models themselves, while pushing beyond prediction toward causal explanation of how those activity patterns emerge. Such a shift would let us take a step closer to meaningfully understanding the neural machinery that produces our emotions.

—

Mohith (Mo) M. Varma is a doctoral student at the MRC Cognition and Brain Sciences Unit, University of Cambridge. His research takes a functionalist approach to understanding what emotions do, focusing in particular on the neurocognitive mechanisms and consequences of emotional blunting, under the supervision of Michael C. Anderson.